Microsoft Certified Data Analyst DA-100 Exam

Episode 64

A Data Analyst enables a business to maximize the value of its data assets. Data Analysts are responsible for designing and building data models, cleaning data, and transforming data so that the organization can draw meaningful business value and insights from it. To earn the Microsoft Certified Data Analyst certification, one must score 700 or higher on the DA-100 exam. In my view, Power BI's native integration with the rest of the Microsoft ecosystem makes it the clear leader in the business intelligence solution space. I have always been a data nerd, and I am grateful to MERAK for supporting me in becoming an expert in Power BI and acquiring the certification. This blog will cover my motivations for the certification, my experience taking the test remotely, and the resources I used to prepare for the exam.

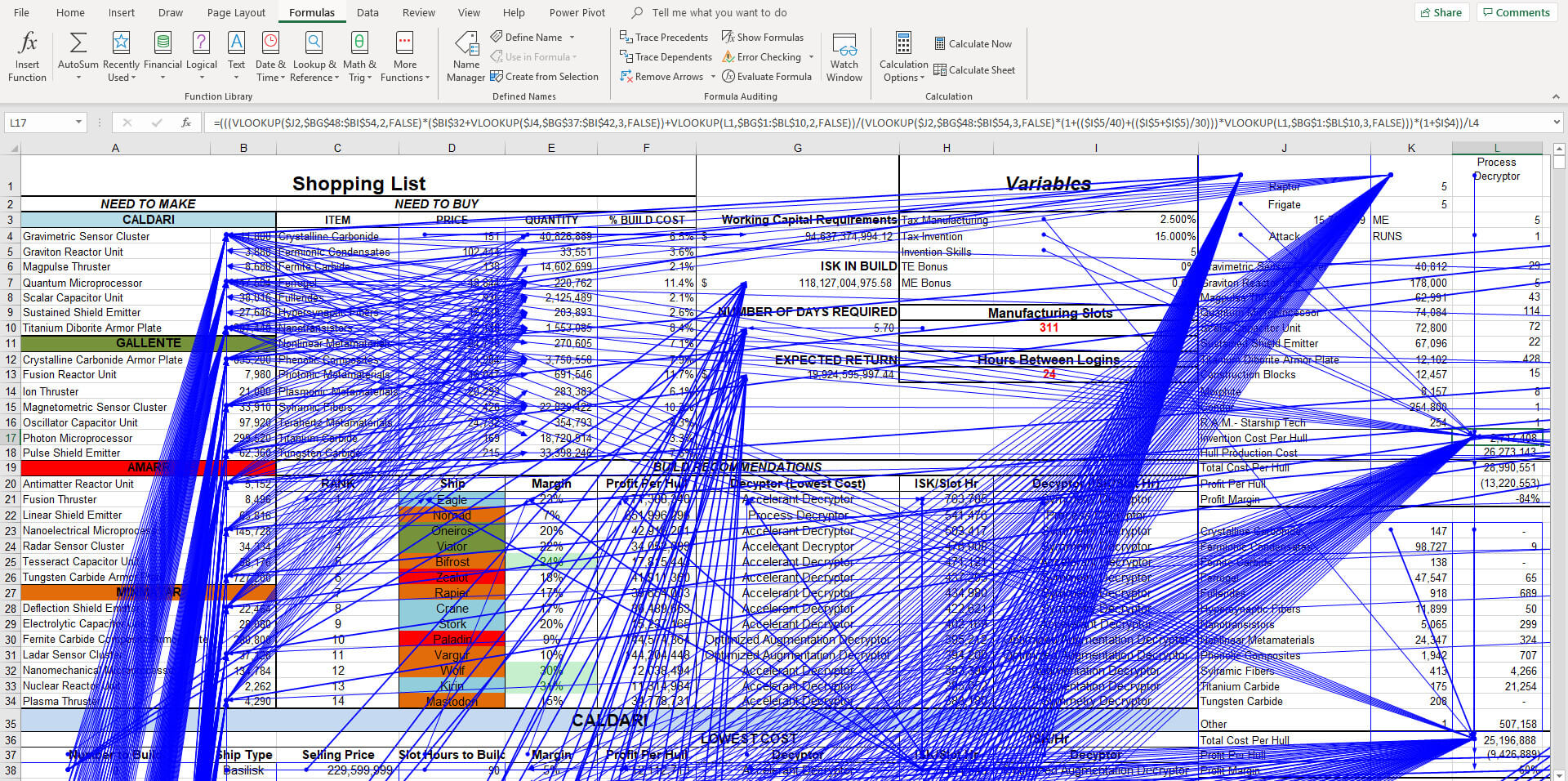

Early in my career, coming from a STEM background, Excel was the analytical multi-tool capable of solving many problems. Demonstrable Excel skills were considered valuable and a potential differentiator when I had little relevant work experience. I used Excel to build financial models, facilitate project and operational planning, perform cost analysis, and capture data. Until I discovered Power BI, Excel was my go-to application whenever a numerical or organizational problem outgrew the napkin.

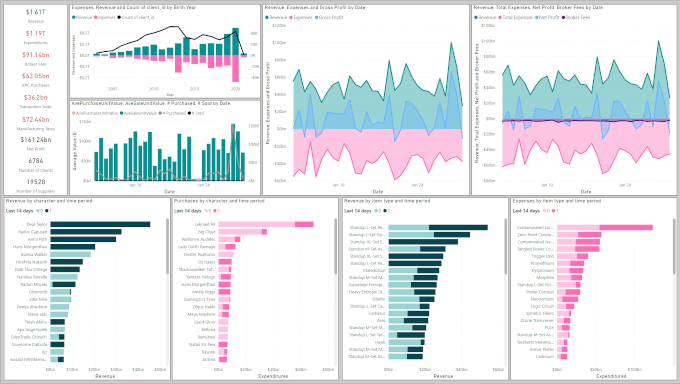

Until I discovered Power Query while solving a problem with parsing JSON files, I struggled with the internal narrative that “I was not a coder”. Using the Power Query editor broke down that barrier and allowed me to develop my skills beyond Excel formulas. Prior to joining MERAK, I managed a complex set of spreadsheets that provided ERP and CRM functionality for a video game (EVE Online), which I have maintained for a little over a decade now. At the time, I felt confident in my skills, only to have that confidence shattered after attending a Microsoft Ignite Power Query talk. It was a really humbling experience to be shown in 45 minutes how much I did not know. Combined with the kind mockery from my MERAK colleagues pointing out that using Excel as a database is not a great idea, this set me off on a major rebuild and a migration into SQL, starting a little over two years ago. The transition would not have been possible without support on initial setup and occasional troubleshooting.

There are a couple of challenges that come with self-learning. The first is that when you solve a problem for the first time, it is likely not the right or best way, so it is very important to revisit old work and correct it when you know better. This can be very time consuming. Another challenge is that I had no idea when I crossed the lines between being a beginner, competent, knowledgeable, or an expert (I feel mastery is not possible for a platform that is so large and changes so rapidly). Building business intelligence solutions typically involves working with confidential data, which makes it hard to demonstrate competency, and not everyone will take a custom application built to facilitate activities in a video game seriously. Therefore, the certification both benchmarked my knowledge and better communicated my competency externally.

The certification process is going to be different for everyone based on how much seat time they have had with the application. If you have been regularly using the application for a year, and have gone from source data to published reports/dashboards/apps, passing the exam should not be too challenging. The content depth is roughly the equivalent of an undergraduate university course.

It is my opinion that with just course preparation material, it would be difficult to get the certification, as there is a fair amount of nuance that needs to be understood when creating a data model. Likewise, first-hand experience sharing and administering reports is very useful, and hard to gain if you are learning on your own, even though the topics are not conceptually difficult. If you learn through doing like me, internalizing the knowledge without a project to test it on would be challenging.

I already had a complex data ecosystem to work with, but if you do not have a clear problem to work on, my advice would be to find a public data source and start building for an audience. Public COVID, economic, weather, or financial data are some sources from which rich models could be developed. It is important to be comfortable with M code and DAX, but you do not need to be a deep expert. If you can read the overall structure of M code and make some modifications to it without the UI, and can aggregate with filters and manipulate dates using DAX, you should have enough background knowledge.

I want to end this blog with my experience writing the exam remotely through Pearson VUE. I feel very fortunate that the remote exam option is available, probably one of the bright spots thanks to COVID. The exam location would have been an out-of-town trip, likely doubling the amount of time away to write the exam. They have a very strict protocol to limit the possibility of cheating. Video and audio are recorded, only a single monitor is permitted, and headsets are not allowed. For my home office, I primarily use a wireless headset, so I had to use my recording microphone and physically disconnect the extra monitors. Also, no talking to yourself during the exam. You photograph your workspace beforehand, and then verify over video that you have made the appropriate changes. Because the cables on my workstation were all neatly tied up, I needed to untie most of them to give my webcam enough flexibility to scan the entire room. Save yourself the trouble, if you can, and use a laptop in an empty room. Aside from the initial setup, the experience was awesome: instant results and no long drive back home. I will definitely do future exams remotely.

Useful DA-100 Resources

- Book: Exam Ref DA-100 Analyzing Data with Microsoft Power BI by Daniil Maslyuk

- Practice Exam Questions: Udemy Microsoft Power Bi DA100 Exam Advanced Practice Test 2021 by Muhammad Omar and Faseeha Matloob

- Practice Exam Questions: www.examtopics.com