Episode 58

Sooner or later, data will be lost, whether through hardware failure, accidental deletion, power failure, natural disaster, or malicious action. To mitigate such events, it is common practice to make copies of our data in case we need to restore what was lost. Although it appears simple on the surface, developing and acting on a backup policy involves many considerations, and the right choices are unique to each organization. This post briefly covers considerations for backing up data to the cloud.

Increasingly, organizations seek to build a data-driven culture; as a result, more data is being retained and analyzed, especially as bottom-up methodologies facilitated by machine learning gain popularity. It feels safer to keep all data and let the model training process or the data junkie decide what is valuable. As data quantities grow exponentially, so do bandwidth and processing requirements. No business model can sustain exponentially growing system requirements indefinitely, and eventually constraints will need to be added to the system.

Not all data are the same, nor should they be backed up in the same way. Data can be categorized by file type, size, timeliness, and classification. File types include text files, application documents, images, audio, and video. Size can range from hundreds of bits to exabytes. Timeliness is an estimate of the useful life of the data and how frequently it will need to be accessed. Lastly, classification can be public, internal, confidential, or restricted. Even this simplified model shows why different categories of data call for differentiated backup policies.
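To make the model concrete, here is a minimal Python sketch that maps a data set's category to a backup policy. The class and function names, thresholds, tier names, and copy counts are all hypothetical and illustrative, not recommendations. This version keys only on timeliness and classification; file type and size are recorded but left for the reader to fold into the rules.

```python
from dataclasses import dataclass
from enum import Enum


class Classification(Enum):
    PUBLIC = 1
    INTERNAL = 2
    CONFIDENTIAL = 3
    RESTRICTED = 4


@dataclass
class DataSet:
    name: str
    file_type: str        # e.g. "text", "image", "video" (not used by the rules below)
    size_bytes: int       # not used by the rules below
    access_days: int      # hypothetical timeliness measure: expected days between accesses
    classification: Classification


@dataclass
class BackupPolicy:
    schedule: str         # "daily", "weekly", or "monthly"
    copies_retained: int
    storage_tier: str     # hypothetical tier names, not a specific provider's
    encrypt: bool


def choose_policy(ds: DataSet) -> BackupPolicy:
    """Map a data set's category to a backup policy (illustrative thresholds only)."""
    # Frequently accessed data gets frequent backups on fast storage;
    # rarely accessed data goes to cheaper, slower storage less often.
    if ds.access_days <= 7:
        schedule, tier = "daily", "hot"
    elif ds.access_days <= 90:
        schedule, tier = "weekly", "cool"
    else:
        schedule, tier = "monthly", "archive"
    # Confidential and restricted data keep an extra copy.
    sensitive = ds.classification in (Classification.CONFIDENTIAL, Classification.RESTRICTED)
    copies = 3 if sensitive else 2
    # Anything that is not public is encrypted.
    encrypt = ds.classification != Classification.PUBLIC
    return BackupPolicy(schedule, copies, tier, encrypt)


logs = DataSet("web-logs", "text", 5 * 10**9, access_days=180,
               classification=Classification.INTERNAL)
print(choose_policy(logs))
# BackupPolicy(schedule='monthly', copies_retained=2, storage_tier='archive', encrypt=True)
```

Keeping the rules in one small function makes the policy easy to review and adjust as the organization's categories change.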

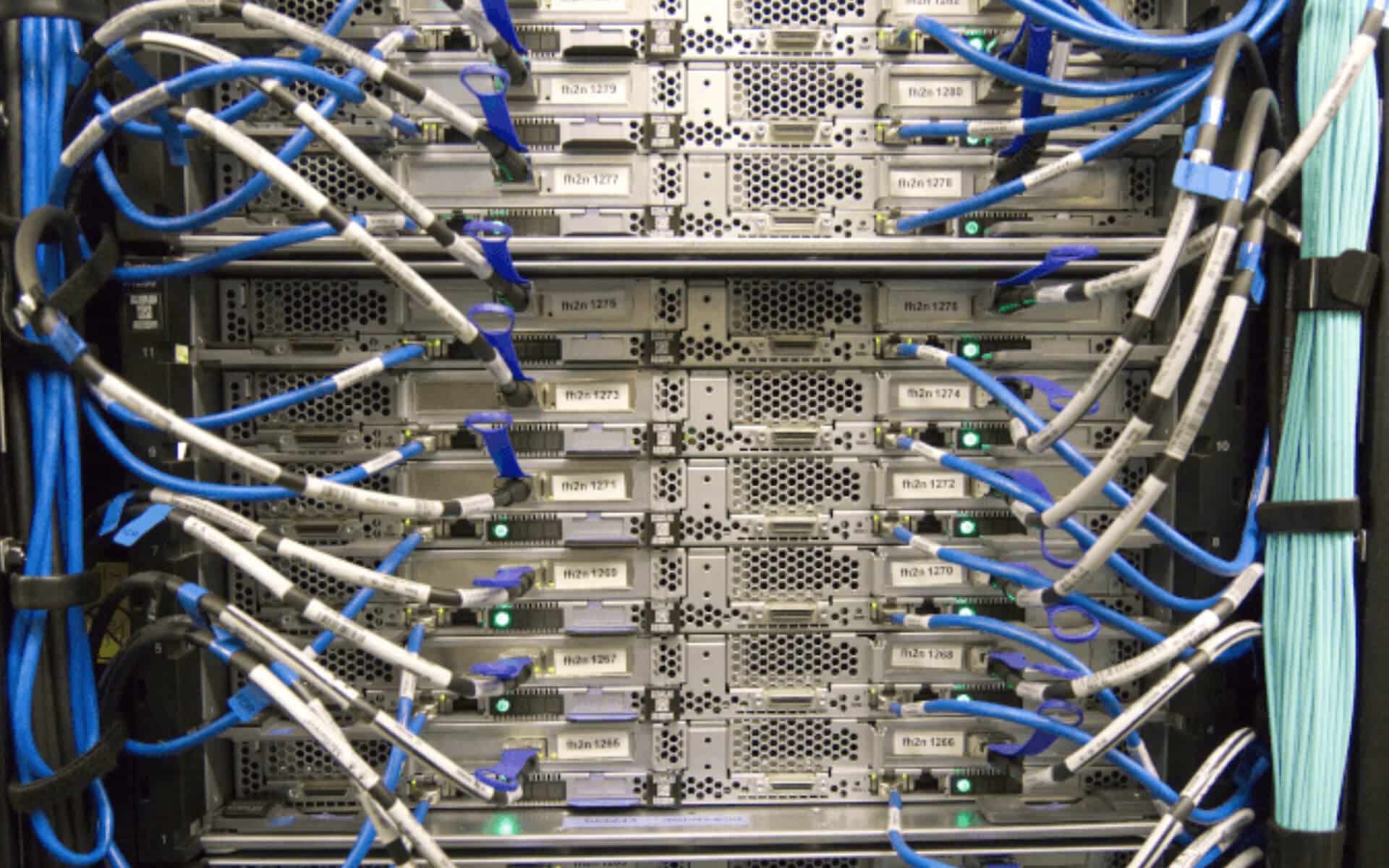

With a model in hand to categorize the data, let’s now look at considerations for cost and recovery. Cloud technologies feel so ethereal that one may believe the data really is in the clouds, but it is physically stored in a datacenter somewhere. That location may matter to organizations with contractual or regulatory requirements that data be retained in its country or jurisdiction of origin. Likewise, that datacenter may experience a natural disaster, and if it holds all of your production and backup data, you are going to have a bad day.

While the common saying is that backup storage is cheap, this is only true under certain conditions. For instance, Google Drive and OneDrive are free up to a point. Costs can add up quickly if backup policies are not tailored to the data's actual storage requirements. To help determine those requirements, ask: What data needs to be stored in the cloud? What is the backup schedule: daily, weekly, or monthly? How many copies are retained, and when are they deleted? What flexibility is required when restoring lost data (a single file, a single machine, a single server, or an entire environment)? Should backup copies be stored in multiple regions or with multiple cloud providers? It should now be easier to see how costs can balloon out of control if data is retained too long, excessive copies are created, multiple cloud providers are used across multiple regions, or archived data must be accessed frequently.
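Several of these questions reduce to simple arithmetic. The back-of-the-envelope sketch below assumes every retained copy is a full copy (no compression, deduplication, or incremental backups) and a flat per-GB monthly price. The function name and every price are hypothetical placeholders rather than any provider's actual rates; real pricing usually also includes request fees, network transfer fees, and early-deletion charges on archive tiers.

```python
def monthly_backup_cost(
    data_gb: float,
    copies_retained: int,
    regions: int,
    price_per_gb_month: float,
    restored_gb_per_month: float = 0.0,
    retrieval_price_per_gb: float = 0.0,
) -> float:
    """Rough monthly cost of full-copy backups under a hypothetical flat pricing model."""
    # Every retained copy is stored in full, once per region.
    stored_gb = data_gb * copies_retained * regions
    storage_cost = stored_gb * price_per_gb_month
    # Archive tiers are cheap to store but often charge to read data back.
    retrieval_cost = restored_gb_per_month * retrieval_price_per_gb
    return storage_cost + retrieval_cost


# Illustrative inputs only: 500 GB of data at a made-up $0.02 per GB-month.
weekly = monthly_backup_cost(500, copies_retained=4, regions=1, price_per_gb_month=0.02)
daily_two_regions = monthly_backup_cost(500, copies_retained=30, regions=2, price_per_gb_month=0.02)
print(f"4 weekly copies, 1 region:  ${weekly:.2f}")             # prints $40.00
print(f"30 daily copies, 2 regions: ${daily_two_regions:.2f}")  # prints $600.00
```

For the same 500 GB of underlying data, keeping a month of daily copies in two regions costs fifteen times as much as keeping four weekly copies in one.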

Any conversation about digital technology goes hand in hand with security. Major cloud providers spend billions of dollars on security research each year, and given their scale, few individual organizations could match their sophistication and expertise. However, it would be foolish to assume that your data is 100% safe just because it is in the cloud. Security is a shared responsibility between the cloud provider and its users: all the security research in the world will not keep an organization’s data safe if an administrator’s access credentials are compromised. It is therefore extremely important that access to backups, and permissions on them, be restricted to as few individuals as possible.

Lastly, disaster recovery is an event one hopes never to experience, and because such events are infrequent, the recovery process tends to be inefficient when it is finally needed. This is where scheduling an occasional “disaster day” can pay huge dividends: mistakes are made, and learned from, in a practice environment rather than during a real outage.
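One concrete check during a practice “disaster day” is confirming that a test restore actually reproduced the original files. The following is a minimal sketch, assuming both the original data and the restored copy are available as local directories; `file_digests` and `verify_restore` are hypothetical names, and how the restore itself is performed depends on your backup tool.

```python
import hashlib
import tempfile
from pathlib import Path


def file_digests(root: Path) -> dict[str, str]:
    """Return {relative path: SHA-256 hex digest} for every file under root."""
    digests = {}
    for path in sorted(root.rglob("*")):
        if path.is_file():
            h = hashlib.sha256()
            # Read in 1 MiB chunks so large files do not have to fit in memory.
            with path.open("rb") as f:
                for chunk in iter(lambda: f.read(1 << 20), b""):
                    h.update(chunk)
            digests[path.relative_to(root).as_posix()] = h.hexdigest()
    return digests


def verify_restore(original: Path, restored: Path) -> list[str]:
    """List files that are missing, unexpected, or altered after a test restore."""
    before, after = file_digests(original), file_digests(restored)
    problems = []
    for rel in sorted(before.keys() | after.keys()):
        if rel not in after:
            problems.append(f"missing: {rel}")
        elif rel not in before:
            problems.append(f"unexpected: {rel}")
        elif before[rel] != after[rel]:
            problems.append(f"altered: {rel}")
    return problems


if __name__ == "__main__":
    # Demo with temporary directories standing in for the source and the restore target.
    with tempfile.TemporaryDirectory() as src, tempfile.TemporaryDirectory() as dst:
        Path(src, "report.txt").write_text("Q2 numbers")
        Path(dst, "report.txt").write_text("Q2 numbers")
        print(verify_restore(Path(src), Path(dst)))  # prints []
```

An empty list means every original file came back byte-for-byte; anything else is a finding worth recording from the drill.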

In short, the cloud provides an extremely robust platform for your organization to secure its data against disaster events. At the same time, excessive redundancy, weak data management, or poor security practices can quickly erode the competitive advantage provided by the cloud.

Last Updated: June 25, 2025